Will Nuclear Fusion Fill the Gap Left by Peak Oil?

Posted by Chris Vernon on January 11, 2007 - 10:45am in The Oil Drum: Europe

On 12 December 2006 the UK Magnetics Society held its annual commemorative event, an afternoon seminar followed by the Ewing Lecture. This year the focus was on magnetic fusion energy.

Nuclear fusion has evoked opinions in the various energy blogs ranging from “sixty years of failure and a certain dead end”, to “the reason why we do not need to worry about peak oil”. This event was a good opportunity to gain a clearer view of what part, if any, fusion energy could play in filling the gap as oil and then gas production peak and decline.

After many years of half-hearted support there is now a surge of backing for fusion energy. Many will have heard about the agreement to build the International Thermonuclear Experimental Reactor (ITER). Less well publicised have been the European Fast Track programme and the bilateral agreement between the EU and Japan called ‘the Broader Approach’ which, amongst other things, will lead to DEMO, the first full electrical power generating reactor. From the UK side this newfound enthusiasm has been in large part due to Sir David King, the Chief Scientific Advisor to the government, who may not fully accept the imminence of peak oil, but does see an energy crisis looming and has become convinced that fusion energy is promising enough to warrant substantial investment.

The four speakers in the seminar were senior members of the staff of the Culham Division of the United Kingdom Atomic Energy Authority (UKAEA). The lecture was given by Dr. Frank Briscoe, operations director at Culham. Culham has been the centre of fusion research in the UK since 1960, when the first large-scale UK fusion experiment, ZETA, was transferred there from Harwell. ZETA was still there, but no longer working, when I first visited Culham from Harwell as a student in 1964. I have retained a personal interest in fusion since then.

Of particular interest to this forum were the Ewing lecture itself, entitled ‘Magnetic Fusion Energy: Progress and the Remaining Challenges’ and the seminar presentation by Dr. Derek Stork entitled ‘Scientific and Engineering Challenges of a DEMO Fusion Reactor’. Since the various contributions overlapped somewhat and some of the material was of specialised interest to those involved in magnetics, I have combined those parts of the contributions that I hope are of interest to this forum.

Dr. Briscoe started his lecture with a brief summary of how nuclear fusion works.

Fusion Basics

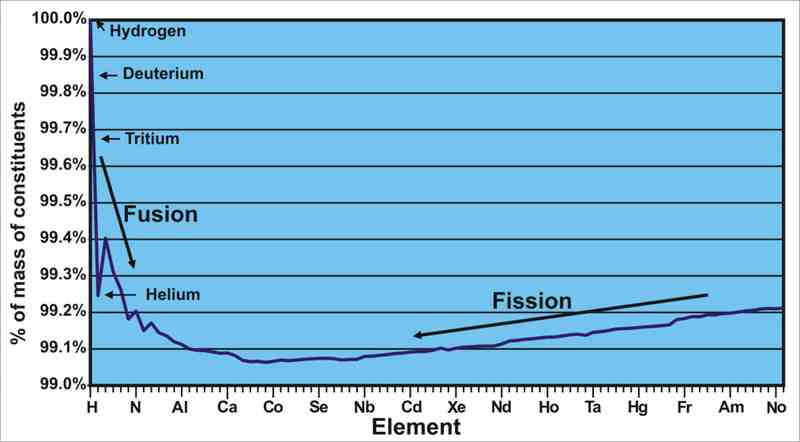

Atomic energy, both fission and fusion, exploits the fact that atoms weigh less than the sum of their parts. This is because of the energy that binds them together and is released in forming the atom from its constituent protons, neutrons and electrons and would have to be expended to rip the atom apart back to these constituent parts. The energy released in binding relates directly to the drop in mass of the atom by Einstein’s equation E = mc². This loss in mass is called the mass defect and varies between the elements. Energy can be released by either splitting very heavy atoms in two (fission) or joining light atoms together (fusion).

Mass defect: The mass of an atom of each element expressed as a percentage of the mass of the protons, neutrons and electrons that constitute the atom. The three isotopes of hydrogen plus the most common or stable isotope of the other elements are shown.

The problem with trying to fuse atoms together is that, although there is a very strong force of attraction between the protons and neutrons in the nucleus when they are very close due to the strong nuclear force, this force drops away very rapidly with distance so that at slightly greater distances the electrostatic repulsion between the positively charged protons becomes dominant. To fuse the nuclei of two atoms, they have to be forced together hard enough to overcome this repulsion until they are close enough together for the force to become attractive.

Many different fusion reactions and many ways of bringing the atoms together have been considered. Far and away the front runner, in terms of progress towards a large-scale commercial electrical power plant, is the reaction between the hydrogen isotopes deuterium and tritium, heated to a temperature at which thermal collisions between the nuclei carry enough energy to overcome the electrostatic repulsion, with magnetic forces used to confine the reactants. The temperature required is of the order of a hundred million degrees. At such a temperature the reactants are completely dissociated into a cloud of nuclei and electrons called a plasma. The plasma is far too hot to confine with material walls, but because the electrons and nuclei are charged and moving they can be deflected by magnetic forces and, with a suitably shaped magnetic field, confined.

The fusion reaction is deuterium plus tritium gives helium plus a neutron:

²H + ³H → ⁴He + n

20% of the energy released by the reaction is carried off by the helium ion as kinetic energy (3.5 MeV per ion). Since the helium is ionised it is charged and is confined by the magnetic field. In colliding with the rest of the plasma it gives up its kinetic energy, heating the plasma. If the condition called ignition can be reached, this will be the only source of heat needed to maintain the plasma temperature.

The other 80% of the reaction energy is carried off as kinetic energy of the neutrons (14.1 MeV per neutron). Since the neutron is not charged it escapes the plasma. In a reactor designed to generate power, a ‘blanket’ surrounds the plasma, and the collisions the neutrons make with the material of the blanket transfer the kinetic energy of the neutrons to the blanket, heating it up. This heat, plus a little gained from absorbing the hard ultraviolet/soft gamma radiation emitted by the plasma, is transferred out of the chamber by a gas or liquid and used to raise steam to drive a turbine, which turns an alternator to generate electricity.
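As a rough check on these figures, the following Python sketch works out the energy released per reaction from the mass lost (E = mc²) and then shares it between the helium ion and the neutron. The rounded atomic masses and the assumption that the reacting nuclei are effectively at rest are mine, not from the lecture, so treat the result as indicative only.

```python
# Atomic masses in unified atomic mass units (u); rounded standard values (assumption).
M_D = 2.014102     # deuterium
M_T = 3.016049     # tritium
M_HE4 = 4.002603   # helium-4
M_N = 1.008665     # free neutron
U_TO_MEV = 931.494  # energy equivalent of 1 u via E = mc^2


def dt_q_value_mev():
    """Energy released per D-T fusion, from the mass lost in the reaction."""
    mass_lost_u = (M_D + M_T) - (M_HE4 + M_N)
    return mass_lost_u * U_TO_MEV


def energy_split_mev(q_mev):
    """Share the released energy between helium and neutron.

    Assumes the reactants are at rest, so the two products carry equal and
    opposite momentum and the lighter neutron takes the larger share.
    """
    total = M_HE4 + M_N
    e_helium = q_mev * M_N / total
    e_neutron = q_mev * M_HE4 / total
    return e_helium, e_neutron


q = dt_q_value_mev()
e_he, e_n = energy_split_mev(q)
print(f"Q = {q:.2f} MeV, helium {e_he:.2f} MeV, neutron {e_n:.2f} MeV")
print(f"helium share = {e_he / q:.0%}")
```

The split depends only on the mass ratio of the two products, which is why it comes out close to 20% and 80% whatever the exact value of the energy release.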

Deuterium exists in enormous quantities in sea water, where it forms 0.03% by weight of the hydrogen. Tritium, however, exists naturally in only tiny amounts generated by cosmic rays and decays away with a half-life of 12.3 years, so that there is only about 3.6kg of naturally generated tritium at any one time, distributed all around the planet. All other tritium has to be made artificially. In a commercial reactor the blanket will also perform the function of creating tritium. It will contain lithium in some form and this will react with the neutrons bombarding it to form tritium using the reaction:

n + ⁶Li → ³H + ⁴He

This tritium will be collected to fuel the continuing fusion reaction. Lithium 6 is a stable isotope and forms 7.5% of natural lithium. Some blanket designs require enrichment of this isotope. The tritium breeding reaction is exothermic and increases the heat production by some 20%.

At first sight these reactions appear to combine to give an overall reaction of:

²H + ⁶Li → 2 ⁴He

However this neat cancellation requires that every neutron from the fusion reaction reacts with a lithium atom to form a tritium atom and that every tritium atom so generated is collected and fed back into the reactor and takes part in a neutron generating fusion reaction. In practice there are many loss mechanisms around the loop that mean that this path alone will not provide sufficient tritium to maintain operation. To augment the tritium production a neutron multiplier is added to the lithium. The main candidates for a neutron multiplier are beryllium and lead. Experiments have shown that tritium self-sufficiency is possible but difficult. To generate much in the way of a surplus is very difficult. Dr. Stork indicated that a tritium breeding ratio of about 1.1 was all that was expected of the designs being considered. This implies that for every 10kg of tritium fed in as fuel for the fusion reaction, 11kg of tritium will be recovered from the blanket. The magnitude of the excess tritium available is probably the limiting factor on how fast fusion energy can spread once a prototype commercial fusion reactor has been demonstrated, as discussed below.

Magnetic Confinement

As part of his seminar presentation, Dr Tom Todd gave a fascinating review of the very varied magnetic confinement systems that have been, and are being, experimented with. Again, by far the front runners in the race to practical power systems are the toroidal systems first developed by the Russians and called ‘tokamaks’, from a Russian acronym. The biggest and most successful tokamak so far is the European-run JET system hosted in the UK at Culham. This produced a peak of 16MW of fusion power in 1997, but many other tokamaks have been built across the world.

Critics of fusion power have categorised these experiments as so many failures because none of them have produced more output fusion power than was put in. In truth none of them were built with the intention of achieving energy break-even. All were intended to understand and develop ways of controlling the seething monster that a dense plasma at a temperature of many millions of degrees is. For the most part these experiments have, after a fair bit of modification and adjustment, reached the sort of performance hoped for. Our ability to contain the plasma at high temperature, high density and for long enough to allow a sufficient degree of reaction to take place has increased four orders of magnitude over 40 years.

This shows the progress over the years at confining a hot plasma. The fusion product is the plasma density in particles/m³ times the time in seconds that the plasma can be held in these conditions, times the ion temperature in kelvin. The requirement for this to be at least 3 × 10²⁸ for ignition to occur in a deuterium/tritium plasma is one of the Lawson criteria formulated by J. D. Lawson in 1955. The best results of JET and JT-60U are close to energy break-even, Q = 1.

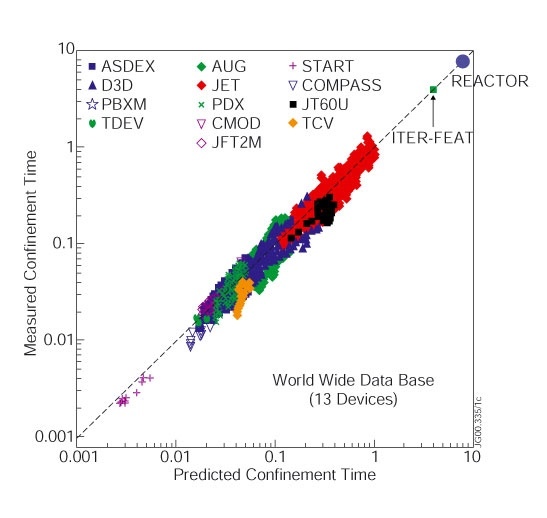
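To make the fusion product concrete, here is a minimal Python sketch that multiplies density, confinement time and ion temperature and compares the result with the ignition threshold, reading the caption's figure as 3 × 10²⁸ K·s/m³. The example plasma values are hypothetical numbers chosen for illustration and do not describe any particular machine.

```python
IGNITION_THRESHOLD = 3e28   # K s / m^3, as read from the figure caption
KELVIN_PER_KEV = 1.16e7     # 1 keV of thermal energy is roughly 11.6 million K


def fusion_product(density_per_m3, confinement_s, ion_temp_k):
    """Density x confinement time x ion temperature, in K s / m^3."""
    return density_per_m3 * confinement_s * ion_temp_k


def meets_ignition(density_per_m3, confinement_s, ion_temp_k):
    """True if the fusion product reaches the quoted ignition threshold."""
    product = fusion_product(density_per_m3, confinement_s, ion_temp_k)
    return product >= IGNITION_THRESHOLD


def threshold_in_kev_units():
    """The same threshold expressed in keV s / m^3, as often quoted."""
    return IGNITION_THRESHOLD / KELVIN_PER_KEV


# Hypothetical plasma: 1e20 particles/m^3 at 150 million K.
for tau in (1.0, 3.0):
    ok = meets_ignition(1e20, tau, 1.5e8)
    print(f"confinement {tau} s -> product {fusion_product(1e20, tau, 1.5e8):.1e}, ignition: {ok}")
```

Because the three quantities simply multiply, a shortfall in one can in principle be made up by another, which is why progress is usually plotted as the single combined figure.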

There has also been great progress in predicting the performance of a plasma device as computer power has increased, so there are now fewer surprises in new experiments.

Predicted and measured confinement time for 13 different fusion devices under a great variety of conditions, plus indications of where ITER and a commercial power reactor are expected to operate by scaling the results of existing machines.

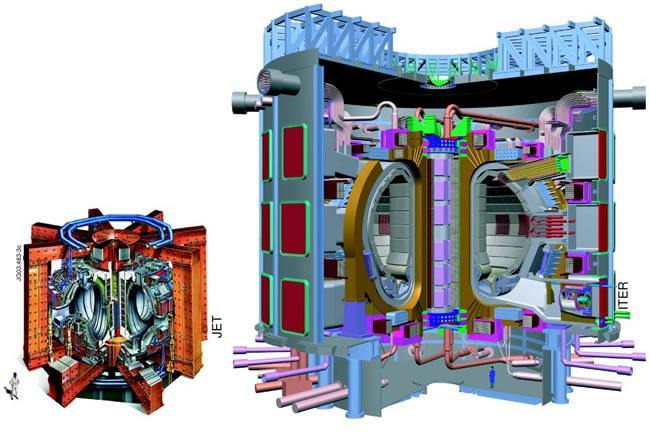

Although there is still more work to do on plasma control, we have now reached the stage where we can be reasonably confident that just scaling up the size of the reactor will produce substantially more power from fusion than is put in to create the reaction. Such a reactor has been designed in detail and the major parts have already been prototyped. On 21 November 2006, after years of delay, an agreement was signed to build ITER at Cadarache in France financed by China, the EU, India, Japan, Russia, South Korea, and the USA. Together these partners represent over half the population of the planet.

JET and ITER: The two reactors are shown in cut-away diagrams. Human figures give the scale.

Power will be fed into the ITER plasma in three main ways: by transformer action causing up to 15 million amps to flow in the plasma; by neutral high energy beams of deuterium and tritium fired into the plasma; and by radio frequency energy fed in from antenna patches in the walls to excite resonances in the plasma. Transformer action is very efficient but necessarily pulsed. The other two forms of heating are less efficient but can be continuous. ITER is expected to generate 500MW of fusion power with less than a tenth of that as input power (Q>10) and to hold that power for 400 seconds. It should also generate 500MW for an hour with an input of no more than one fifth of the output power (Q>5). Although it is not stated as an aim, there is the hope that it might achieve what is called ignition, where enough of the fusion energy remains in the plasma to keep the reaction going without the need for external input energy (Q = infinity). This will require higher plasma densities than are needed with external energy input.
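The Q figures translate directly into heating power. The short Python sketch below takes Q as fusion power divided by external input power, and uses the 20% helium share quoted earlier to estimate how much of the fusion power stays in the plasma as self-heating. The function names are my own and purely illustrative.

```python
import math


def required_input_mw(fusion_mw, q):
    """External heating power implied by a given Q (fusion power / input power)."""
    if math.isinf(q):
        return 0.0  # ignition: no external heating needed
    return fusion_mw / q


def self_heating_mw(fusion_mw, helium_fraction=0.2):
    """Power deposited in the plasma by the charged helium ions."""
    return fusion_mw * helium_fraction


for q in (1, 5, 10, math.inf):
    external = required_input_mw(500.0, q)
    print(f"Q = {q}: external input {external:.0f} MW, "
          f"self-heating {self_heating_mw(500.0):.0f} MW")
```

On these assumptions the helium ions already supply more heat than the external systems once Q exceeds 5, which is why the Q>10 regime is described as a burning plasma.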

Although there seems to be reasonable confidence that ITER will come at least close to the target in plasma performance this is just the start of the challenge that needs to be met to build a commercial electrical power generating station.

If all goes well ITER will produce the first plasma before the end of 2016, but, in order to speed the development of commercial fusion power, a ‘Fast Track’ strategy is being adopted and in addition to the ITER agreement there has been a bilateral agreement between the EU and Japan called ‘the Broader Approach’. Studies of the DEMO reactor to follow ITER are part of this agreement.

Beyond ITER

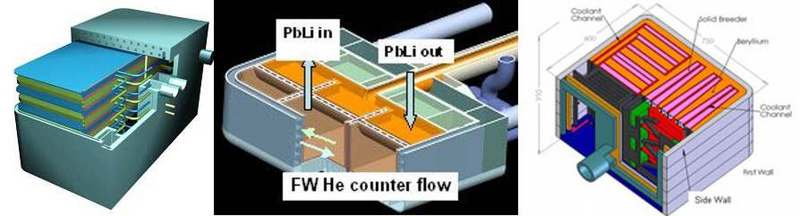

A major hurdle to be jumped is the design of the breeding blanket that lines the inside of the reaction chamber and the selection of suitable materials for it. This blanket is required for three purposes: to convert the energy given off by the fusion reaction to heat, to breed more tritium to fuel the reaction and to protect the superconducting coils and chamber wall from neutron irradiation. A variety of different blanket designs have been proposed and all of them have some problematic features. ITER will not have (certainly not in the early years) a full tritium breeding blanket. Most of the reaction chamber will be lined with a simple cooled neutron and heat absorbing blanket to stop the reactor overheating. There will however be space to fit in and remove small areas of tritium breeding blanket and it is proposed to try, in turn, a variety of different designs.

Montage of some of the proposed Test Blanket Modules.

DEMO will have a full breeding blanket to achieve tritium self-sufficiency. The materials used to make the breeding blanket, and particularly the first wall facing the plasma, need to survive an extremely severe combination of conditions and retain adequate strength and other mechanical properties. The heat flux on the first wall of the blanket will be 0.1 to 0.3 MW/m² in ITER and rise to 0.5 MW/m² in DEMO. This DEMO figure is about twice that of a PWR type fission reactor and almost the same as a fast breeder fission reactor. The flux of energetic neutrons means that over about 5 years every atom in the first wall will have been knocked out of place an average of about 3 times for ITER and 50-80 times in DEMO, and perhaps twice this in a full scale commercial reactor. Each displacement will shift the atom several tens of crystal lattice spaces from its original site. Atomic transmutations caused by the neutron flux will leave hydrogen and helium embedded in the wall. For DEMO this will result in 500-800 parts per million by atom count (appm) of helium and 2300 to 3600 appm of hydrogen. For ITER it will be acceptable for the blanket to be well cooled to keep it at a fairly low temperature, but in a reactor trying to generate electricity by a conventional steam cycle it is important for high thermal efficiency that the steam, and hence the blanket coolant, are run at as high a temperature as possible. It is expected that the blanket structure will operate at 500°C to 800°C.

This combination of requirements means there is almost no chance of a breeder blanket that can survive the full life of the reactor. After a few years the material properties of the blanket structure will have degraded so much that it will have to be replaced. The inside of the chamber will be far too radioactive for a person to go in there, and so a remote handling arm will have to reach in through one of the ports, bending around the central pillar where required, and remove the old blanket section by section and replace it section by section with a new blanket, disconnecting and reconnecting the pipework (probably by cutting and re-welding) without spillage. The sections are likely to weigh several tonnes. The blanket sections will have to have a fairly tight fit to protect all the chamber wall and coils, but the extreme service conditions mean that they will be significantly distorted at the end of their service life. They must not jam in place or the long articulated arm will not be able to pull them out. There is reasonable confidence that a blanket of some sort can be built to operate for some length of time, but the economics of a future power station will depend heavily on how hot the blanket can run, how long it can survive before replacement and how fast it can be replaced. The remote handling arm is a major engineering challenge.

The helium generated in the plasma by the fusion reaction, and any other contaminants such as material coming off the structure under severe bombardment, need to be removed from the plasma continuously to allow the reaction to continue. To this end, at the bottom of the reaction chamber there is a divertor structure where the magnetic field is reduced so that a small fraction of the plasma separates and is allowed to cool as it circulates, to the point where it recombines to form neutral atoms before colliding with the divertor plates. The gases can then be pumped out and the hydrogen isotopes separated for re-injection. Although the plasma has been cooled before hitting the divertor plates, the heat flux on them is still enormous. In the DEMO reactor it will be about 15MW/m². This is about 15% of the energy generated by the fusion reaction, and this energy will be taken away by a coolant (probably helium) and used to generate electricity together with the heat from the blanket. 15MW/m² is about 20% of the power density at the surface of the sun. There is even less chance of the divertor plates lasting the lifetime of the reactor. In fact the lifetime may be as short as two years and these will also need to be replaced by remote handling, in sections usually called cassettes.
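The comparison with the sun can be checked with the Stefan-Boltzmann law, which gives the power radiated per unit area of a black body. In this Python sketch the solar effective temperature of about 5772 K and the black-body treatment of the solar surface are my assumptions.

```python
STEFAN_BOLTZMANN = 5.670374e-8   # W m^-2 K^-4
SUN_SURFACE_TEMP_K = 5772.0      # assumed effective temperature of the sun
DIVERTOR_FLUX_MW_M2 = 15.0       # DEMO divertor figure quoted in the text


def blackbody_flux_mw_per_m2(temp_k):
    """Power radiated per square metre of a black body, in MW/m^2."""
    return STEFAN_BOLTZMANN * temp_k ** 4 / 1e6


sun_flux = blackbody_flux_mw_per_m2(SUN_SURFACE_TEMP_K)
ratio = DIVERTOR_FLUX_MW_M2 / sun_flux
print(f"sun surface: {sun_flux:.0f} MW/m^2, divertor is {ratio:.0%} of that")
```

On these assumptions the divertor load comes out at a little under a quarter of the solar surface flux, in line with the rounded figure of about 20%.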

As well as all the other requirements that must be met by the materials of the blanket and divertor, it is important that the amount of radioactivity induced in them by neutron bombardment is minimised. This precludes the use of some elements that might otherwise be useful for alloying. Theoretically there are no net radioactive materials produced by the main reactions of the reactor since the tritium is recycled. However there will be radioactive waste due to side effects such as neutron activation and tritium embedded in the structure. This will affect both the parts replaced during the reactor's lifetime and the reactor itself when it is decommissioned. Predicting the level of radioactivity of the waste is difficult and impurity levels will have a strong influence. However the radioactivity has been estimated to be 2 orders of magnitude less than that of a fission reactor and to be short lived, so that after 100 years the level of radiotoxicity will be less than that of the waste from an equivalent-sized coal fired power station. Keeping the radiotoxicity low will require the tritium recovery and recycling to be achieved with extremely low leakage.

Decay with time of radiotoxicity.

The development and testing of materials to meet these very onerous requirements is crucial to the speed of deployment of fusion energy. Because ITER will not produce a plasma for almost 10 years at best, and even then will produce a neutron flux nowhere near as intense as in DEMO and only intermittently, it has been decided to build a special facility to reproduce, over a small area, the conditions that DEMO and following reactors will have to face. This will be called the International Fusion Materials Irradiation Facility (IFMIF). It will be developed and tested in Japan as part of the Broader Approach agreement, although the final site for the installation has not yet been agreed. At IFMIF two 40 MeV linear accelerators will provide 250mA of deuterons which will be targeted on flowing liquid lithium to produce neutrons with an energy spectrum up to 14 MeV, matching that expected in DEMO. The flux will be sufficient to produce 20 displacements per atom per year in the test samples. Further steps that will be taken to speed up development are that the JET reactor at Culham will have its present carbon chamber lining converted back to a metal wall to provide test data on this material for ITER and DEMO, and the JT-60 tokamak will be upgraded to have superconducting coils and to act as a satellite control for ITER. A smaller UK tokamak, MAST, which has a toroidal plasma aspect ratio squeezed so tight that it is like a spherical apple with the core cut out, will also provide input to the DEMO design.
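Two quick sums put the IFMIF figures in context. The Python sketch below estimates the beam power, assuming the 250mA is the combined current of both accelerators and that each deuteron carries a single charge, and then estimates how long a sample would need in IFMIF to collect the 50-80 displacements per atom expected over about 5 years in DEMO. Both assumptions are mine.

```python
def beam_power_mw(energy_mev, current_a):
    """Beam power for singly charged particles: MeV per particle x amps = MW."""
    return energy_mev * current_a


def years_to_reach_dose(target_dpa, dpa_per_year):
    """Irradiation time needed to accumulate a given number of displacements per atom."""
    return target_dpa / dpa_per_year


IFMIF_DPA_PER_YEAR = 20.0  # figure quoted in the text

print(f"beam power: {beam_power_mw(40.0, 0.250):.0f} MW")
for dose in (50.0, 80.0):
    years = years_to_reach_dose(dose, IFMIF_DPA_PER_YEAR)
    print(f"{dose:.0f} dpa needs about {years:.1f} years in IFMIF")
```

On these numbers IFMIF would reproduce DEMO's five-year first-wall dose in roughly half the time or less, which is the point of building it.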

Dr. Briscoe summarised the challenges ahead with the following table:

| ITEM | Existing Devices | ITER | IFMIF | DEMO Phase 1 | DEMO Phase 2 | Power Plant |
|---|---|---|---|---|---|---|
| plasma disruption avoidance | 2 | 3 | C | R | R | |
| steady-state operation | 1 | 3 | 3 | r | r | |
| divertor performance | 2 | 3 | R | R | R | |
| burning plasma at Q>10 | 3 | R | R | R | | |
| power plant plasma performance | 1 | 3 | C | R | R | |
| tritium self-sufficiency | 1 | 3 | R | R | | |
| materials characterisation | 3 | R | R | R | | |
| plasma-facing surface lifetime | 1 | 2 | 2 | 3 | R | |
| facing wall/ blanket/ divertor materials lifetime | 1 | 2 | 2 | 3 | R | |
| facing wall/ blanket components lifetime | 1 | 1 | 1 | 3 | R | |
| neutral beam/radio frequency heating systems performance | 1 | 3 | R | R | R | |
| electricity generation at high availability | 1 | 3 | R | | | |
| superconducting machine | 2 | 3 | R | R | R | |
| tritium issues | 1 | 3 | R | R | R | |
| remote handling | 2 | 3 | R | R | R | |

KEY

| Code | Meaning |
|---|---|
| 1 | Will help to resolve the issue |
| 2 | May resolve the issue |
| 3 | Should resolve the issue |
| C | Confirmation of resolution needed |
| r | Solution is desirable |
| R | Solution is a requirement |

Timetable

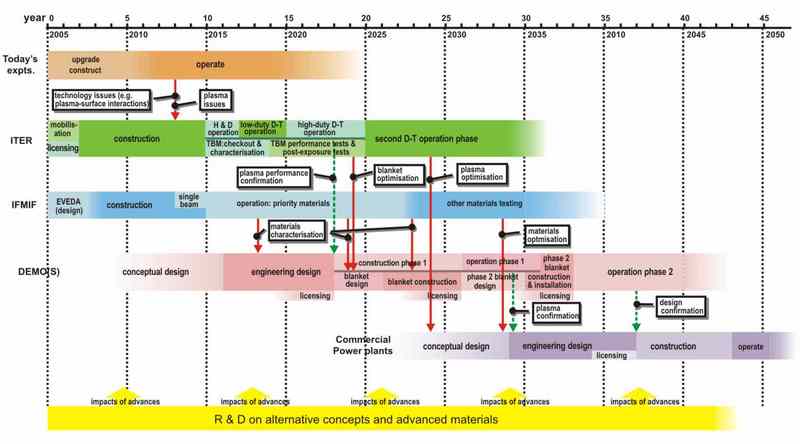

A timetable has been proposed for the overlapping development of the various proposed devices. It assumes that the only obstacles to its implementation are technical ones and comes with many caveats, but it sees the first commercial power station operational in 2048. Even if this very compressed timetable is met, it does not signify the widespread availability of fusion power. There is a limit, set by the supplies of tritium, to the rate at which the number of fusion power stations can be multiplied.

The Fast Track timetable.

Tritium Supplies

The large scale adoption of fusion energy will see tritium used on a scale vastly greater than has ever been seen before. Something like 220kg per year of tritium will be consumed for every 1GW of continuous electrical generation, assuming that 4GW thermal will generate 2GW electrical, of which 1GW will be used to provide all the inputs to the system, leaving 1GW of output power. At present world-wide electrical consumption averages a continuous 1700GW.
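The 220kg figure can be reproduced from the energy released per reaction. This Python sketch assumes that all 4GW of thermal power comes from the fusion reaction itself at about 17.6 MeV per tritium nucleus consumed (ignoring the extra heat from the breeding reaction) and uses rounded physical constants; the function name is hypothetical.

```python
MEV_TO_J = 1.602177e-13          # joules per MeV
U_TO_KG = 1.660539e-27           # kilograms per atomic mass unit
SECONDS_PER_YEAR = 365.25 * 24 * 3600
Q_DT_MEV = 17.6                  # energy per D-T reaction (3.5 + 14.1 MeV)
TRITIUM_MASS_U = 3.016           # mass of one tritium atom in u


def tritium_burn_kg_per_year(thermal_gw):
    """Tritium consumed per year to sustain a given fusion thermal power."""
    reactions_per_second = thermal_gw * 1e9 / (Q_DT_MEV * MEV_TO_J)
    kg_per_second = reactions_per_second * TRITIUM_MASS_U * U_TO_KG
    return kg_per_second * SECONDS_PER_YEAR


per_gw_electrical = tritium_burn_kg_per_year(4.0)  # 4 GW thermal per net GW electrical
print(f"{per_gw_electrical:.0f} kg of tritium per year per net GW electrical")
print(f"{per_gw_electrical * 1700 / 1000:.0f} tonnes per year for 1700 GW")
```

Scaled to the whole of today's electrical demand this comes to some hundreds of tonnes a year, which shows why every kilogram must come from breeding rather than from any existing stock.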

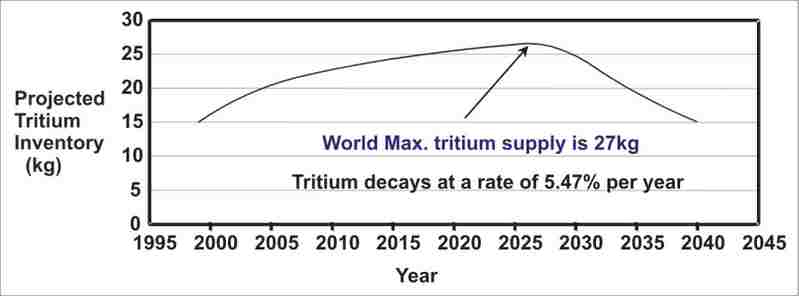

Nearly all the world's supply of non-military tritium comes from the heavy water used to moderate CANDU reactors, and some of these will be closing down in the near future. The supply accumulated over 40 years of operation of CANDU reactors will peak in 2027 at 27kg.

The world’s commercially available supply of tritium before any is removed by the fusion programme.

Military reactors designed for tritium production produced only a few kilograms a year at a cost of about $200M/kg. Tritium increases the yield of thermonuclear warheads (H bombs). It is thought that about 4g is used in each warhead, added in a container just before launch, so that decay of the tritium does not limit the shelf life of the weapon. There have been some hints that the latest warheads being designed will not use tritium. The US had a number of military reactors at its Savannah River site especially designed for tritium production but the last of these was closed down in 1988. It is believed that over 220kg of tritium was produced there over the years but that there was only about 73kg left in 1995, which will now have decayed to about 37kg. It is unlikely the US military will release any of this for civil fusion power. One of the speakers said that he believed that at one time the Russians had mentioned the possibility of releasing some of their supply, but had no further details. Other civil fission reactors could produce small amounts by placing lithium inside the reactor, but some back-of-envelope calculations that I did for a comment to a previous post show that it would take at least 60 tonnes of unenriched uranium to produce 1kg of tritium in a standard reactor, and it may be much more. Specially designed accelerators are theoretically capable of producing tritium, and have been considered for military needs, but one to generate a few kilograms per year was estimated to cost $4.8 to 6.1 billion in 1991 prices and would produce vast quantities of radioactive waste.
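The decay of the Savannah River stock follows directly from the 12.3 year half-life quoted earlier. A minimal Python sketch, assuming no tritium has been added or withdrawn since 1995:

```python
TRITIUM_HALF_LIFE_YEARS = 12.3


def remaining_kg(initial_kg, years_elapsed):
    """Tritium left after radioactive decay, with no additions or withdrawals."""
    return initial_kg * 0.5 ** (years_elapsed / TRITIUM_HALF_LIFE_YEARS)


stock_2007 = remaining_kg(73.0, 2007 - 1995)
print(f"73 kg in 1995 -> about {stock_2007:.0f} kg in 2007")
print(f"annual loss rate: {1 - remaining_kg(1.0, 1.0):.1%}")
```

The same arithmetic applies to any stockpile held for a future reactor: each year of delay costs a little over 5% of whatever tritium is in store.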

Since ITER will produce only a tiny proportion of the tritium it uses (at least in the early years) because the experimental test blanket modules will only cover a small area of the chamber, ITER alone will severely deplete the world's tritium stock. If DEMO is heavily overlapped in time with ITER the tritium supply will be very critical and it will be important to get the full blanket and tritium recovery system going as soon as possible if the programme is not to be delayed.

If fusion reactors are to proliferate, then probably near the start, and certainly after the first few, each new reactor will be relying on the small surplus tritium production from existing reactors to provide the start-up charge of tritium. This is likely to be some tens of kilograms. When I asked him, Dr Briscoe said that it will probably take two-and-a-half to three years from the start of one reactor for it to supply enough surplus tritium to start up another. The estimate he gave was that even if the only obstacles were technical ones it will be 2100 before fusion can supply more than 30% of Europe’s electricity.
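The two-and-a-half to three year figure is consistent with the numbers given earlier. The Python sketch below combines the 220kg per year burn rate for a plant with 1GW of net output, the breeding ratio of about 1.1 and a start-up charge of 60kg. The 60kg is a hypothetical value picked from within "some tens of kilograms", and the sketch ignores decay of the stored surplus and any tritium held up in the recovery system.

```python
def surplus_kg_per_year(burn_kg_per_year, breeding_ratio):
    """Tritium bred in excess of what the reactor itself burns."""
    return burn_kg_per_year * (breeding_ratio - 1.0)


def years_to_seed_new_reactor(startup_kg, burn_kg_per_year, breeding_ratio):
    """Time for one reactor to accumulate the start-up charge for another."""
    surplus = surplus_kg_per_year(burn_kg_per_year, breeding_ratio)
    if surplus <= 0:
        raise ValueError("no surplus: breeding ratio must exceed 1")
    return startup_kg / surplus


surplus = surplus_kg_per_year(220.0, 1.1)
years = years_to_seed_new_reactor(60.0, 220.0, 1.1)  # 60 kg start-up charge is hypothetical
print(f"surplus {surplus:.0f} kg/year, new reactor seeded after {years:.1f} years")
```

The result is very sensitive to the breeding ratio: on the same assumptions a ratio of 1.05 would double the waiting time.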

The Energy Gap

All but the most dewy-eyed optimists foresee the production of conventional oil severely curtailed by then. If we are to get to 2100 without major economic collapse or using so much coal, tar and oil shale that the carbon dioxide will have risen to disastrous levels, we will have had to have adopted other energy sources on a major scale, as well as substantially cutting our energy consumption and finding some way of replacing liquid hydrocarbons for transport. Fusion will then be competing in a very different environment.

Cost estimates at this stage are obviously uncertain in the extreme, but most estimates put the cost of generated electricity as comparable in today’s prices with today’s fossil fuel generated power. Most of the cost is in the capital cost of the plant amortised over the life of the plant. Running costs, periodic replacements and decommissioning costs come next, with fuel costs less than 1% of these and not likely to rise through depletion of the richer ore, as would be the case with very widespread use of thermal fission plants. One estimate puts the capital cost at €14/W electrical for DEMO, falling to €4/W for commercial plants in serial production. This link allows you to play with the assumptions and produce an electricity price.

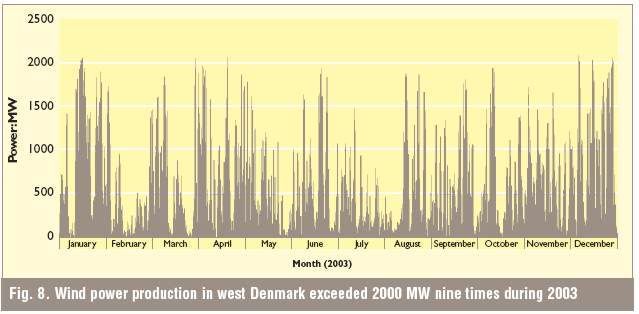

These prices should be compared to today's fission and coal plants at €3/W and €1.5/W respectively for the plant alone. However, the capital costs of coal plants do not include costs to mitigate environmental damage. Wind energy capital costs are now about €1.5/W, but wind plants rarely have a load factor of more than 30%, whereas fusion plants, after initial settling-in, could have an 85% load factor. Correspondingly more rated wind power plant would need to be installed to meet the same electrical demand. If wind power were to supply more than 30% of the total supply, extensive power storage would be needed and the transmission costs would be greater, as many of the turbines would be scattered across areas remote from demand.
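One way to put the wind and fusion figures on the same footing is to divide the capital cost per rated watt by the load factor, giving a cost per watt actually delivered on average. The Python sketch below does this for the figures quoted above; it ignores storage, transmission, fuel and running costs, and fission and coal are left out because no load factor is given for them here.

```python
def cost_per_average_watt(capital_eur_per_rated_w, load_factor):
    """Capital cost per watt of average delivered output."""
    if not 0 < load_factor <= 1:
        raise ValueError("load factor must be between 0 and 1")
    return capital_eur_per_rated_w / load_factor


# (capital cost in EUR per rated watt, load factor) as quoted in the text
plants = {
    "wind": (1.5, 0.30),
    "fusion, serial production": (4.0, 0.85),
    "fusion, DEMO": (14.0, 0.85),
}

for name, (capital, load_factor) in plants.items():
    cost = cost_per_average_watt(capital, load_factor)
    print(f"{name}: EUR {cost:.2f} per average watt")
```

On this crude measure a serially produced fusion plant and wind come out at a similar capital cost per delivered watt, before the storage and transmission penalties for wind are counted.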

The carbon dioxide emissions associated with fusion are again very difficult to estimate at this distance, but will also be dominated by those generated in building the reactor, its associated plant and the periodically replaceable parts. The carbon dioxide generated in fuel production and preparation will be constant and low in comparison.

Fusion reactors are inherently proof against melt-down or nuclear explosion. There simply is not enough fuel in the reactor at any one time to cause one. Any failure of coolant, magnet supply or other major system will cause the reaction to die in milliseconds. There is not enough heat in the system, even without coolant, to breach the containment vessel. According to this report, even in the worst credible accident, with the release of all the tritium on site, there would be no need to evacuate anybody beyond the site boundary. They are very much less tempting a target for attack by violent political or religious groups than thermal fission reactors, still less than fast breeder reactors.

If a large part of the energy gap world wide were to be made up by fission reactors using the present system of thermal reactors with once-through use of enriched uranium, we would rapidly be reduced to the use of ever lower grades of ore requiring more energy input. Some estimates put the point at which there is no energy gain at an ore grade of 0.02% uranium, and suggest that if nuclear energy were expanded from the present 16% to 50% of electrical generation we would be reduced to using such ores in 50 years. There has been much argument over such estimates, but in the long term it is clear that if we are to rely on fission for a major part of our electricity, let alone total energy, we will need to move to fast breeder reactors or thorium reactors. The long development time of fusion energy may seem disheartening but that of fast breeders is not much better.

In 1946, the year I was born, the Nobel prize winner, Sir George Thomson, working at Imperial College London applied for a patent for using a gas discharge to generate controlled thermonuclear fusion. If the proposed timetable is kept to and if I live to be 102 I may just, in my dotage, see his dream brought to commercial reality. I do not know which of the two is more improbable.

Very thorough and comprehensive overview. Well done!


Not this side of a century.

First you have to achieve not just more than breakeven, but a plant whose heat engine can produce enough energy to run the confinement apparatus.

Second you have to compete with fission, which has enough fuel to last thousands of years just for light water reactors. In breeder reactor regimes fuel costs are negligible; in spite of being more expensive and difficult to implement than light water reactors, they're still worlds simpler than any conceptual fusion power plant.

Someday I'm sure it will be useful, but in the far far future.

I was talking to Bob Hirsch, famed for his DOE paper looking at peak oil mitigation timeframes, at the ASPO conference last year. He's totally dismissive of fusion power. He should know what he's talking about seeing as his bio includes:

The reasons he gave were cost (will always be more costly than fission), complexity (always more complex than fission) and waste (although fusion doesn't produce the very long lifetime waste products, hundreds of years is just as bad as thousands in commercial terms). Nick's report, though, suggests waste quantities are significantly less than for fission even over relatively short time scales.

Hirsch is a fan of fission, suggesting over 1,000 years' supply of fissionable material, including fast breeders, which he said were cheaper and easier than fusion. Whilst not doubting fusion could/will work technically (although he did say that the current research was going in the wrong direction), his problem with it is that it'll just never be competitive with fission.

On a related note I've realised a lot of older scientists who are peak oil aware are also supporters of fission as a significant part of the solution. My theory for why this is the case is that many of this generation of scientists became aware of fossil fuel depletion issues in the seventies, when the atom was still highly regarded, especially amongst the scientific community. The drawbacks that have become apparent over the last 20 years were not fully recognised then and the first positive impressions of nuclear power stuck!

Thanks to CV & others

Posts like this are why I read TOD each day

Regards/And1

I think that many younger environmentalists do not realize that solutions have been identified for many of the technical problems with fission, such as proliferation, long lived wastes and runaway reactions. Their unwillingness to consider it as part of our energy solution might have made sense before we understood the implications of peak oil. But now we know that fission, and especially the potential of thorium, is just about the only good large scale energy source that will be plentiful for the next 100 years.

Maybe a review similar to the present one is in order, to cover the state of affairs regarding fission. I hear about various sorts of technology, like breeder reactors or thorium reactors or actinide burners. But how far have any of these technologies been pushed - are there working prototypes? What barriers remain to profitable use?

What always interests me is not a blank reassurance like "That problem has been solved," but rather a survey of the current research frontier. No problem is ever solved so finally that somebody somewhere isn't trying to find a better solution. For example, the waste problem. I hear some really stupid comments from folks that do not understand that the problem is rarely the danger from standing next to a radioactive source; after all, shielding is cheap. The problem is what happens when the radioactive material gets into the air or water or soil and from there enters a person's body. Furthermore, radioactivity tends to corrode whatever material is used to contain the waste, so building a containment vessel that can last tens of thousands of years is much harder than it would be if the contents were not radioactive.

Some folks are apparently reassured by opaque promises that all the problems have been solved, or will soon be solved, or will be solved anyway by the fantastic technology that our great grandchildren will surely have developed to fix the problems we are leaving to them. I find it much more informative to learn about the current research frontier. There are surely folks around who are working to design better containment vessels for radioactive waste. What sorts of issues are they struggling with? If I know where the boundaries are, I can get a good idea of the size of the country.

Weapons proliferation is hardly a technical problem. The idea that such a problem can be "solved" is quite strange. The USA seems to be moving away from an approach of international cooperation to one of strong arm domination. For example, the recent treaty with India is quite strange. It sure looks like it is OK to develop nuclear weapons as long as you make a deal with the USA like maybe don't build a gas pipeline to Iran, or what was that deal all about anyway. "The dominant player stays strong enough to be able to impose its will on all others" - is that the kind of solution you envision?

But the problem gets really thick. If the current direction of the USA continues, where government and industry can use secret police and martial law to concentrate power and profit, then it seems unlikely that any very effective solutions for problems like waste management will get implemented. We have here a classical recipe for corruption. There are always lots of opportunities to increase profits by cutting corners and sacrificing safety.

So the big pattern I see developing is:

danger of weapons proliferation -> concentration of power -> cutting corners on safety

I would really like to see a review of the state of affairs with fission technology that addresses the issues at least at this depth.

Another issue I rarely see addressed in the realm of problems with nuclear power is the issue of mining. If fission power is hugely ramped up, mining waste and pollution issues will become correspondingly huge. As in many other such issues, there are sensible approaches that can mitigate the problems. The big question is, will these sensible approaches be implemented? Our poor past record with other energy sources, primarily those that require actual mining such as coal and tar sands, is not at all encouraging.

Not a lot of mining needed. The amount of uranium it would take to power the country for a year would fit into a few semi trailers. Even with low grade ore, that's not a lot. Of course, without breeders, multiply that by a hundred or so, but it still isn't much, maybe one medium sized mine or so. Certainly nothing like the scale of our mining for almost any other mineral imaginable (copper, cobalt, coal, you name it).

I read some time ago about Swedish trials for containing nuclear waste. IIRC they were using layers of stainless steel with a copper layer outermost, burying it a few hundred meters deep in granite in an area not prone to earthquakes. Each copper container would have its own niche cut out in the granite, surrounded by a layer of clay that swells in contact with water and seals the container from groundwater; bentonite, I believe, has that quality. More I can't remember.

I wouldn't be surprised if Magnus Redin knew more about this particular project :)

Bait taken. :)

The containers will have an inner structure of cast iron with a bolted-on lid and channels where dried used fuel elements and other highly active core components will be inserted. This iron structure provides mechanical strength.

The inner cast iron structure will then be inserted in a 50 mm thick copper shell and a lid is friction stir welded to hermetically seal the container.

The containers are then to be stored embedded in bentonite clay, which indeed swells when wet, at a depth of about 500 m in crystalline bedrock. They will either be stored individually in vertical holes bored into the floor of a tunnel or several together in a horizontal hole drilled between two tunnels. The latter method requires excavation of a smaller rock volume.

There has been geological research for this for about 20 years; the most interesting find is probably microbiological activity in the rock cracks.

Production methods for the inserts and copper shells have been developed; friction stir welding was better than electron beam welding. Copper forgings of this size were something new. Capsule handling has been tested and capsules with simulated decay heat stored and retrieved. A plant for filling the containers is being designed; I don't know if the design is finished.

All of the research, the interim used fuel storage and so on is paid for by a fund filled by a small fee on every kWh produced. The same fund will also cover the dismantling of the reactors when they are worn out.

The research and the solution are shared with Finland, which has the same kind of bedrock.

Here is a link to an official page with lots of information:

http://www.skb.se/default2____16775.aspx

The final site for the storage is being decided in competition between two municipalities. It's expected that final storage will commence in 2018; this means that all the major investments in facilities will be made while the power plants are running, which I find wise if world finance should burp. A cute bonus idea is to build a railroad with the excavated rock if it is built close to Oskarshamn.

I find this solution good enough for me; it's probably overengineered.

Thanks for confirming most of what I said, and for the swift reply. 500 m is pretty deep; it does seem like this scheme will keep the material safe for perhaps several glaciations and interglacials, that is if Scandinavia will be glaciated again before the nuclides decay. Who knows if the cycle is broken. I will now go back to reading "The Prize".

I expect that far before the end of this century, all of what we now call "nuclear waste" will be taken out and recycled. The actinides will be burnt in specially designed reactors and the remaining fissile material will be used and enriched, and only the small fraction of remaining undecayed isotopes will be returned to the storages.

And of course our kids will be amazed at the stupidity of their parents and grandparents, doing what they do now...

I expect that far before the end of this century, all of what we now call "nuclear waste" will be taken out and recycled.

I beg to differ. Reprocessing of this kind has proven to be both unnecessary and uneconomical. Even burying spent fuel is not the most economical approach; it's cheaper to just seal the stuff in armored casks and guard them.

About the mining: a Japanese group, some years ago, came up with a polyamidoxime polymer (obtained basically by treating ordinary acrylic polymer with hydroxylamine in hot methanol). This polymer selectively adsorbs uranium from seawater. Suspended in the ocean in a natural current, it adsorbs 1% of its weight in uranium over a period of months, which is not bad when you consider the concentration of uranium in the water is around 3 ppb. It can then be washed with dilute acid to liberate the uranium and reused.
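As a rough check on what those two figures imply, the arithmetic can be sketched in a few lines of Python. The 3 ppb concentration and the 1% loading come from the comment above; the function name and the `recovery` parameter are illustrative assumptions, not anything the Japanese group published.

```python
# Back-of-envelope check of the seawater figures quoted in the comment.
URANIUM_PPB = 3.0        # from the comment: about 3 parts per billion by mass
LOADING_FRACTION = 0.01  # from the comment: adsorbent takes up 1% of its weight


def seawater_needed_kg(adsorbent_kg, recovery=1.0):
    """Mass of seawater (kg) that must flow past the adsorbent to load it fully.

    recovery is a hypothetical capture efficiency (1.0 means every
    uranium atom passing the polymer is captured, which is optimistic).
    """
    uranium_kg = adsorbent_kg * LOADING_FRACTION
    uranium_per_kg_seawater = URANIUM_PPB * 1e-9
    return uranium_kg / (uranium_per_kg_seawater * recovery)


if __name__ == "__main__":
    tonnes = seawater_needed_kg(1.0) / 1000.0
    print(f"{tonnes:,.0f} tonnes of seawater per kg of adsorbent")
```

Even with perfect capture, each kilogram of polymer has to see a few thousand tonnes of water, which is why the scheme relies on a natural ocean current rather than pumping.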

The group estimated the cost of the uranium obtained to be a few times the current spot market price. There would be no mining waste, since the uranium is already liberated in the environment, as are all the decay products like radium and radon. At their estimated cost, reprocessing and construction of breeder reactors could be delayed for centuries, even if the world goes over to mostly nuclear energy as its primary energy source.

Seawater uranium extraction deserves more attention than it has been receiving, since it could render some other large government energy research expenditures (like breeder reactors, advanced nuclear fuel cycles, or DT fusion) superfluous for the foreseeable future. The primary cost of seawater extraction is the capital cost of the support structure for the adsorbent, so combining this with offshore wind might be a good idea (they could share structural elements).

This has only been market tested with aqueous methods, which certainly aren't low on capital and labor and are more or less designed for plutonium extraction. Of course it's been more expensive than it will ever be worth. I fully expect that, utilizing pyroprocessing methods, we'll at least do uranium and fission product extraction sometime this century, as long as we avoid the trap of trying to do MOX fuel nonsense. Now maybe the actinides can be burnt someday in a fast neutron reactor of some sort for profit or maybe they can't, but there is potential profit to be made with non-aqueous methods on the unburnt uranium, xenon, and fission platinoids.

And then we can't discount the political machines that make unnecessary and uneconomical things happen anyway.

And seawater uranium extraction doesn't deserve any attention at all because we'll have too much uranium from more conventional ores for it to ever compete.

I continue to disagree. As it stands right now, reprocessing would be uneconomical even if it were free. The plutonium has negative value, costing more to fabricate into fuel elements than it saves in enriched uranium. This will be true of any reactor with Pu in the fuel elements, since the cost driver (the intense alpha activity of the Pu) will be the same.

I consider homogeneous reactor systems, like molten salt reactors, to be nonstarters for practical reasons. No reactor operator wants a reactor in which the entire primary loop is intensely radioactive. Nor do they want reactors that have to include sophisticated chemical processing equipment for online reprocessing.

And seawater uranium extraction doesn't deserve any attention at all because we'll have too much uranium from more conventional ores for it to ever compete.

If so, that would be another reason to not go with reprocessing or breeding.

Where did I talk about Pu? As it stands, reprocessing just the uranium would be valuable, along with fission platinoids, xenon, and other marketable fission products. Dump the transuranic actinides separately.

It's sure a separate business model from LWRs. But the benefits of no fuel fabrication, low fissile load, and extremely small waste stream are there. And while fuel costs are a small component of the cost of nuclear power, they aren't negligible.

Given that MSRs have never been market tested, suggesting that they're a nonstarter because of a different business model is a bit premature.

I am glad you are open to a debate. Waste is less of a problem if you burn up all the long lived waste so that what remains has a half-life of only a few hundred years. That technology has been proven. Proliferation is partially a technical problem in that some reactors and fuel cycles create more material that can be made into bombs than others. If the result mixes fissionable and non-fissionable isotopes in a ratio that is not weapons grade, then the proliferator needs to build an isotope separation process, which is extremely expensive and difficult. And regarding mining waste, the volume of fission fuel is very small compared to, say, oil sands.

I am not saying that the problems have all been eliminated but that we have learned an enormous amount in some 60 years of experience. In peak oil and gas, we face a problem of enormous magnitude. Wind and solar are potentially good energy sources but they are very diffuse and intermittent. We will need to exploit every resource that we can find but we still may not avoid a catastrophe.

I would like to know what kind of proof you are talking about. I have done a little bit of googling and only turned up speculative designs.

There are two issues of course:

1) Given some complex mix of chemicals in various isotopes, one can probably devise a system to separate out the various components and then use neutron beams of the right energy or whatever to induce nuclear transformations.

2) Is there a way to do something like this but in a cost-effective way?

So I would really like to hear about working systems to eliminate long-life radioactive products from spent nuclear fuel.

Really, "proof" is a mathematical concept. It has some relevance to something like physics, less so to engineering, and almost none in the real world. A system that seems to be working on one day can turn out to be a miserable failure the next.

Sure you can do that with fast neutron incinerator reactors. A liquid chloride fast neutron reactor fed actinides and other transuranics can breed thorium into U233 for liquid fluoride reactors, or it can just dump the neutron surplus into conversion of long lived fission products into stable isotopes.

A better question though is why bother? These things are pretty easily contained and monitored over at least a century, and one can very reasonably assume we'll have better techniques for managing waste by then. Chemical waste is toxic forever, but we don't have giant programs to convert lead into iron.

We'll do it if it makes sense, and if not, just stick it in an empty lot.

You haven't looked very hard.

Take fuel rods, melt them down, mix with liquid salt. Put in an electrode, run current, pull out a giant lump of metal. This is all the actinides. Melt down, make new fuel rods, you're done. Pour the salt into a drum, seal it, come back in 300 years and it's not dangerous anymore. Simple, easy, hardly the rocket science that the eco-dweebs would have you believe.

The only complication is that after you do this a few times, the resulting fuel needs to go into a fast breeder reactor, because thermal neutrons won't fission some of the heavy actinides. That being said, breeders are old hat: the French had one going in the 1970s (Phénix), and its larger successor Superphénix was shut down for good in the late 1990s. Those are hardly the only breeder reactors around.

In any case, it was eventually closed because eco-terrorists hated it (even fired rockets at it), god forbid we get a reliable and non-polluting source of power, don't you know, and because it was pretty costly. It didn't make much sense in an age of cheap uranium, but it was hardly a technical difficulty even using 1970s technology.

In any case, these various technologies are stupidly simple, a fission reactor is little more than a big pile of metal with some pipes for heating water, and they've been proven a hundred times over through the course of the last 40 years or so.

The issue with this is that there isn't much research to do with fission. Most of the research is related to making fuel rods that can last a really long time between changes, as that saves money and is a pretty hard problem. Beyond that, reactors were easy even in 1960; it's just a very simple technology. For all the current reactor designs being floated around, there's not the slightest chance that they won't work exactly as expected, modulo cost overruns in the construction and excessive maintenance costs. There just isn't much research to do here; all the problems are solved, and none of them were hard to begin with.

1) Waste management. The industry doesn't really care much, because handling any volume of waste just isn't that expensive, but if you care, google pyrometallurgy for a better way to do it. There's not the slightest chance this won't work exactly as advertised; it's been tested and everything. The only question is, how cheap will it be, and why bother. I think we should bother, but I'm not calling the shots. It makes the waste safe in about 300 years (as compared to 100 or 200 for a fusion reactor). Most reprocessing has historically been done with PUREX, as that's what the military used to make weapons, so why not use the same process? Once again, it works, and no civilian systems are terribly concerned with reprocessing waste, so why bother.

2) Breeder reactors. This was old hat in the 1960s; look up Superphénix. They cost more though, so people never bothered, because uranium is cheap. Some were built and ran for decades; the environmentalists always hated them, and they were generally shut down because uranium is cheap, so what's the point.

3) Proliferation. The US is already a nuclear power; I can't fathom how us using more nuclear power allows Bangladesh to get nukes.

The nuclear industry had a decades long record of arrogance, overconfidence, lies and secrecy. Not to mention a series of very serious disasters which were 'impossible' (Windscale, Chernobyl, TMI) and a legacy of radioactive waste and sites which will take decades or centuries to deal with (most notably on the military side, of course).

Which is not to say that big improvements haven't been made, eg in US reactor operation and safety post the 1979 Three Mile Island disaster. Or that in an age where global warming is the greatest threat, that it doesn't have a role.

It was an industry conceived in an age of great techno-optimism, when it was inconceivable that human action could permanently degrade the environment, and the opportunities for human progress were endless. Massive government subsidies were poured in to bring the industry to life.

Today we are vastly more cognisant of the risks of unintended consequences, of the biases of large government agencies and government-industrial complexes, and of the tendencies towards secrecy and denial of large bureaucracies.

In a curious mirror of global warming, we don't trust authorities as much any more. Just as people are not willing to trust any number of authoritative scientists on the reality of global warming, so they are not willing to trust scientists and engineers on the safety and efficacy of nuclear power.

Call it a post modern age. We no longer believe that there are truths, separate from their societal and social context and meanings. It is argued that global warming is a giant conspiracy by scientists to enhance their own position and funding, and weaken the United States. That is an argument entirely founded on views about how society works as a social and political construct.

This, above all, a group of French philosophers has left us with as a view of the world.

I have always felt that it was ridiculous to place power metals in a deep mine and predict that they won't be touched for geologic ages. It's no different than locking up the Pharaohs' gold in a pyramid. For eternity.

The real problem with actinide stores is that some men will eventually purposely mine it to obtain the power metals.

Reprocessing and burning up the actinides is the only way to ensure that there is nothing in the waste depository that anyone would want.

Although today I understand that archaeologists actually open the ancient cesspools to explore the diets by inspecting the fecal remains. So to say even that won't happen is still unpredictable.

But the attraction of going after power fissionables will ensure that man WILL disturb the waste depository.

I don't think we will continue to use fission long term, after Fusion becomes practical, but some facilities will eventually be created to specifically consume the power actinides and remove them forever.

I used to be very much against fission reactors because of safety concerns. I wouldn't make that argument any longer because of the safety record of fission reactors. If we vastly expanded their use, there would be very serious accidents, but there is not a single technology which is perfectly safe and has a clean environmental bill. So it becomes a judgement call. That is a call we have to make collectively.

I do see a very problematic future for fission because of local politics, though. After all, who in their right mind wants one of these next door, even if the risks are small and understood? Moreover, federal politics has shown itself to be incapable of supplying fission technology with the needed regulatory framework and long term infrastructure for reprocessing and fuel recycling for breeders. Thorium reactors might be a way out, but they seem to have a couple of decades of R&D ahead, still.

And finally... until the total cost of fission including waste storage is known, we can only assume that electricity from reactors is not significantly cheaper than electricity from renewables. But this would probably change if waste heat recycling (for industrial processes and heating) could be included into the equation. I don't know how serious R&D efforts in the US are to look into that, though.

The cost of waste storage is nearly all upfront. The rest is nearly zero because of discounting. Now the cost of geologic repositories might be huge, but they're political objects that are entirely unnecessary. Just store the waste in an empty lot for the next century and revisit the issue again. There will probably even be a market for it with all the unused fuel and fission platinoids in it.
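The discounting argument can be illustrated with a standard present-value calculation. This is only a sketch: the 5% rate and the 100-year horizon are hypothetical numbers chosen for illustration, not figures from the comment.

```python
def present_value(future_cost, years, discount_rate):
    """Value today of a cost paid `years` from now, at a constant annual rate."""
    return future_cost / (1.0 + discount_rate) ** years


if __name__ == "__main__":
    # Hypothetical inputs: a cost of 1.0 (any currency unit) paid a
    # century from now, discounted at 5% per year.
    pv = present_value(1.0, 100, 0.05)
    print(f"Present value of 1.0 paid in 100 years at 5%: {pv:.4f}")
```

At rates like this a cost deferred by a century shrinks to under one percent of its face value, which is the sense in which the later costs are "nearly zero". The result is very sensitive to the rate chosen, and whether discounting is the right way to weigh burdens on future generations is exactly what the replies below dispute.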

Right. Let your great-grandkids clean up after you.

I want my MTV!

Sure, just like we're dealing with the massive social ills caused by the lead pipes of the Roman empire.

Right. Let your great-grandkids clean up after you.

Right, do unnecessary work now and let your great-grandkids clean up the incurred public debt.

The 'economic pollution' of unnecessary government spending is a worse problem than the nominal cost imposed on future generations by interim storage of spent nuclear fuel.

Conventional reactors can also be retrofitted to breed/convert thorium to uranium. I worked on the successful thorium light water breeder reactor project back in the late 1970's. The Canadian CANDU reactor also offers lots of possibilities for using thorium and for minimizing long-lived nuclear waste. We have the technology. We just need to have realistic economic incentives against generating both CO2 from fossil fuels and long lived nuclear waste from conventional reactors. See www.thoriumpower.com for some more info on one thorium fuel cycle approach.

The problem with any breeding configuration in solid fuel reactors is that it implies an entirely separate reprocessing and fuel fabrication regime that has to deal with fairly hot fuel elements, unlike fresh uranium before it gets stuck in the reactor. This vastly magnifies cost and probably isn't worth it. We're more likely to reap benefits from fluid fuel reactors or once-through light water reactors.

Now reprocessing with molten salts might offer some advantages, but all large scale reprocessing plants today use aqueous methods which are just in every way horrible. They are ideal for doing plutonium extraction for weapons production, but not so much for reactor fuel.

One good thing with small and medium size liquid fuel reactors that run at a high temperature is that they can deliver both thermally manufactured hydrogen and hot process steam to oil refineries. That ought to be a good way to make heavy oil and old refinery infrastructure last longer.

The problem is with any breeding configuration in solid fuel reactors that implies an entirely separate reprocessing and fuel fabrication

This omits the possibility of a system that could breed and consume fuel in-situ, without reprocessing. This would require fuel elements capable of achieving high burnup, but metal fuel elements have that property.

The problem would be keeping the reactivity within bounds as the fuel evolved. This could be done either by careful and frequent rearrangement of discrete fuel elements (a thorium-uranium near-breeding scheme in CANDU did this) or by use of an accelerator-driven reactor that can continue to operate as k declines (just turn up the accelerator; time share the beam between multiple cores so the accelerator's capacity remains fully utilized.)

I support fusion research even given the low probability of success because a success would be very important.

However, I think that equal level of commitment ought to be given to accelerator-based fission or other advanced fission cycles, because the fundamental physics is easier and there is less major technological development necessary.

Accelerator based fission (and other fast neutron schemes) can burn up the long-lived radioactive actinides which are the problem for waste disposal.

Accelerator based fission also has no meltdown/chain-reaction problem. As with fusion, removing the external inputs stops the reaction.

The fundamental advantage of fission over fusion has persisted since the beginning.

In the fission reaction you shoot a neutral particle at the nucleus. There is no interaction until it gets close enough for the strong force.

In a fusion reaction you have to squeeze two like-charged, and hence repelling, particles very closely together. If you just slightly miss---which almost all collisions do---you end up increasing chaos and driving towards thermodynamic equilibrium thereby reducing the reaction rate.

We knew about fusion reactions in the early 1930's and fission reactions in the late 1930's. There was a major industrial scale fission plant a few years later and full commercial operation just a little while after that.

We're not even close to Hanford with fusion.

I think advanced, well designed fission reactors are the way to go.

The only thing accelerator based fission buys you is extra control on criticality excursions. A 'meltdown' is still possible as much of the heat present is heat from decay chains. A better thing to do is just design a safe critical reactor for the hard epithermal spectrum for actinide incineration, and liquid chloride reactors are the way to go with that.

Or you can use modern light water reactors and stick the waste in casks. It's not like we're short of space for them.

The accelerator driven thorium reactor proposed by Carlo Rubbia, Director General of CERN 1989-93, is safe against runaway. It is inherently sub-critical, as only one spare neutron is produced per fission event, and even a tiny loss of neutrons, of which there must be some, will lead to the reaction dying away almost instantly with no neutron input. Only the input from the accelerator keeps the reaction going, and the reaction rate cannot be faster than that determined by the maximum accelerator output and the fixed multiplication factor. Although there will still be a lot of after-heat from the fission reactions, it is much less than from a normal reactor, and his design uses passive convection of vast amounts of liquid lead in a deep chamber dug into the ground. Although it is not impossible to imagine a loss of coolant accident and melt-down (a very violent earthquake), with the correct choice of site it is vastly less likely than the already small chance of such an accident in a PWR that requires pumped cooling.
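The role of the fixed multiplication factor can be sketched with the textbook geometric-series picture of a sub-critical assembly: each source neutron starts a chain in which every generation is k times the size of the previous one, with k below 1. The value k = 0.98 used here is purely illustrative and is not taken from Rubbia's design.

```python
def neutrons_per_source_neutron(k):
    """Total neutrons in a chain started by one source neutron.

    Sums the geometric series 1 + k + k^2 + ... = 1 / (1 - k),
    which only converges for a sub-critical assembly (0 <= k < 1).
    """
    if not 0.0 <= k < 1.0:
        raise ValueError("k must satisfy 0 <= k < 1 for a sub-critical assembly")
    return 1.0 / (1.0 - k)


def surviving_fraction(k, generations):
    """Relative size of a chain `generations` after the source is switched off."""
    return k ** generations


if __name__ == "__main__":
    k = 0.98  # hypothetical multiplication factor, for illustration only
    print(f"Gain per source neutron at k={k}: {neutrons_per_source_neutron(k):.0f}")
    print(f"Chain remaining after 1000 generations: {surviving_fraction(k, 1000):.1e}")
```

Because the gain is finite and fixed by k, the fission rate is capped by the accelerator's maximum output, and with the beam off the chains shrink geometrically. This covers only the chain reaction; it says nothing about decay heat, which persists regardless.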

The additional advantages of there being vastly more thorium 232 (almost all of natural thorium) than uranium 235 (0.7% of natural uranium) in the world, the massively lower production of long lived actinides plus the possibility of transmuting them to shorter lived isotopes, the lack of the need for enrichment and the far lower opportunity of diverting material to weapon use, either by the operator or through raids by violent political or religious groups, make it an attractive option if we choose the widespread adoption of fission power generation.

So is any critical reactor with passive safety features and negative reactivity coefficients, and you don't have to buy an accelerator with the package. Rubbia is an accelerator guy and sees everything as a nail to be solved with his favorite hammer. Accelerator driven systems are a bad, expensive idea. An interesting one, but they're dumb.

You can use thorium in critical reactors that are far more economical than any ADS.

http://thoriumenergy.blogspot.com/

Careful here, you aren't really talking about the same thing as the parent. The parent is noting that even a sub-critical reactor can melt down, potentially, and he's right. Energy generation doesn't stop when fission stops, but rather slows gradually over the next few days, because much of the energy comes from the rapid radioactive decay of the fission fragments.

In any case, modern reactors are safe. There is a 100% probability that coal will kill at least 300,000 people in the US alone this year and every other. The worst nuclear disaster in history killed maybe 100 people, and no matter how many ways you try to add to that tally, it's still way less than any given year of coal in a fairly large country. Even if you go all out and try to make the nuclear tally in the thousands, or tens of thousands, you aren't going to be able to (with a straight face) claim that it's even on the same order of magnitude as coal, millions per year worldwide. Also, fossil fuels release more radioactivity than even nuclear disasters, to say nothing of lead and mercury.

In any reasonable comparison (number of people killed or sickened, etc.) civilian nuclear power is about the best out there, possibly as safe as or safer than some (if not all) renewables. Hydroelectric dams burst, mechanics fall off windmills, and those solar panels don't just make themselves, you know; hydrofluoric acid is no fun to be splashed with.

300,000 per year at a death rate of 8.26 per thousand in a population of 300M would mean about 12% of all deaths are caused by coal. This seems way too high. Also, coal at the moment provides more energy than nuclear fission. Fission deaths should include uranium mining, which is not without hazard and will increase as ore grades sink and more has to be mined for a given output. This somewhat closes the gap with coal, but I agree coal is more dangerous and not free from radioactivity, as my graph shows.
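The share can be checked directly from the round figures quoted in this exchange. This is only a back-of-envelope sketch; the variable names are illustrative and the inputs are the commenters' numbers, not measured data.

```python
# Sanity check of the coal-death share, using only the figures quoted above.
population = 300_000_000          # US population, as quoted
crude_death_rate = 8.26 / 1000    # deaths per person per year, as quoted
claimed_coal_deaths = 300_000     # per year, the figure being questioned

total_deaths = population * crude_death_rate
coal_share = claimed_coal_deaths / total_deaths

print(f"total deaths per year: {total_deaths:,.0f}")   # 2,478,000
print(f"coal share of all deaths: {coal_share:.1%}")   # 12.1%
```

On these inputs the claimed figure would make coal responsible for roughly one death in eight, which is the reason for doubting it.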

As regards melt-down of a thorium accelerator driven reactor, I did accept that melt-down is a remote possibility and specifically mentioned after-heat, but in the design I mentioned, all that is needed to prevent melt-down is that the 10,000 tonnes of lead stays in the pit. No playing with the controls, failure of auxiliary equipment or fracture of piping or containment vessel will lead to a melt-down. None of these would even lead to the temperature rising. It is unlikely that a direct hit by an aircraft would do it.

My point is that in a modern design for a critical reactor, you're not going to have any chance of meltdown either. You aren't getting added safety worth the enormous infrastructure cost.

A better thing to do is just design a safe critical reactor for the hard epithermal spectrum for actinide incineration, and liquid chloride reactors are the way to go with that.

Does going to hard spectra for actinide burnup (to reduce half life of wastes) cause additional safety issues?

I thought that was the principal motivation for a spallation system, that you can get both fast and safe and with reasonably robust lack of complicated plumbing/chemical loops.

Anyway, as policy I would advocate actinide-burning extremely safe fission (accelerator as one potential to explore, not exclusively) as a major addition to fusion.

I also believe that existing light water reactors are still so much better than coal that we ought to use them regardless of the cost increase, but not enough people seem to agree. I guess that the nuclear option will have to present huge advantages, with answers for nearly all traditional nuclear problems (not just relative superiority to coal), before it is accepted.

Why else the work on fusion if that weren't the approximate consensus?

Yes. You have a much smaller delayed neutron component, so reactivity flux tends to be higher and the reactor is harder to control.

You're trading one set of complications for much more complication. That accelerator won't be cheap. In any case, the accelerator system will either use a solid fuel system where you have to have a reprocessing regime for fuel fabrication (not good, much more complicated) or you'll be using some fluid fuel liquid chloride regime already, which has all the plumbing you seek to avoid.

But in a fluid fuel reactor, even a criticality excursion isn't _that_ bad. The fuel can get real hot, but the void coefficient is negative, as is the temperature coefficient, so it can't go on a runaway excursion... the worst that happens is bubbles in the fuel and melting of the freeze plug, and the fuel drains into dump tanks, which is an easy fix in a well designed fluid fuel reactor.

Critical thermal thorium reactors with liquid fluoride fuels are my preferred next generation reactors. They don't produce long lived actinides in the waste stream, and the actinide component of the fuel is small enough that you can just burn it in the reactor. I think we should start building hundreds of mature LWRs today, and fast track liquid fluoride reactors for the next generation.

Because fusion is cool. That doesn't make it a good solution to energy problems, but it is very cool engineering. So was the Apollo program, and that was just as useless.

So a summary of this is: fusion is hot, it's almost working (the next generation of plant will probably generate power) but the technical startup costs (especially tritium) will prevent large scale adoption for the next 100 years, and as such there is no chance it will save us from PO?

Anyway, a well written article explaining the pros and cons of the current state of the industry more clearly than I've read in a while.

A great summary with some extra insights for me, thank you!

There is another obstacle, which I would call socio-technical:

A fusion plant of the proposed design relies on a very, very high level of industrial infrastructure and knowledge.

To name just one example: The production of the required tons of super-pure materials for the blanket currently poses huge problems even for the most advanced nations in the world.

So it is not just a matter of devising and licensing the proper blueprints for everyone to be able to build such a plant. The necessary level of sophistication and therefore complexity of the supporting infrastructure and its management processes might well turn out to be beyond the achievable, particularly in societies under serious energy depletion pressure.

Look at how difficult it is for developing countries to even build a "simple" nuclear fission reactor - usually, they cannot do this on their own, and some cannot do it at all, even though all principles and even medium-scale blueprints must be considered "public domain" by now.

Re spending money on fusion: Sure, there might be some usable output eventually. But as with all investments, you have to look at the opportunity costs: How does the investment in fusion compare with other alternative energy investments in terms of effectiveness, timeline, amortisation etc.?

If you took the money and financed low-tech combined heat and power plants with district heating where population density is high enough (all cities!), or large-scale solar thermal district heating or at least block heating, the numbers would make much more sense and the solutions can be deployed today.

Tech people often have the tendency to dismiss the pragmatic, allegedly imperfect solution and rather support the research for an imagined far-off Wunderwaffe as the solution to all problems. I think this is a classic fallacy.

Cheers,

Davidyson

These are research and development efforts, not technology that can be fielded today.

As such there is no guarantee that it will work out, but if we don't try we won't get to know what we can know. Since the effort is at the edge of what our technology can handle, a failure at least hones our collective skills in material science, etc.

It would be dumb to bet all the development budgets on fusion and crazy to use the "deploy today's technology" budgets for it, but if we leave no money for this kind of research we lower the chance that things will be a lot better in the medium term future. The same goes for breeder reactor research, novel solar cell research, etc.

And we should definitely go ahead with this if the doomers are right and things will go to hell regardless of what we do. Then future generations can say that during the golden age they lit and held a sun for a short while before losing it. Not a bad pyramid to leave as heritage; done once, it might someday be repeated in a better way.

Well, money can only be spent once. If the cost of fusion research were just a couple of million per year, I would agree with you. But completely in sync with the law of diminishing returns, the intellectual and financial effort is huge.

It is a bit like playing the lottery - to have any sizable probability of winning, you have to spend a lot of money and still have no guarantee.

It is also a bit like dismissing a readily available and effective, but painful cure for some short-term deadly illness because you want to put the money into research for a less painful cure that might be developed one day. Oh, and you also withdraw the doctors from the sick, because they are needed to do the research.

Sorry, but I can't follow you at all.

Davidyson

My standard argument is that we should do what we can do right now, since we cannot depend on long term breakthroughs; good things will probably happen, but we cannot be sure about what and when.

Unfortunately I don't usually insert a "mostly", since we should also do research and development for the long term and to get to know more about how the physical world works. I would be happy, for instance, to see a Hubble 2 fly and have us find out more about things distant enough in space and time to never ever be of any utility for energy or for feeding a physical need. That's an example of very expensive science, the kind of project we can only do a few dozen of at the same time, and most of them are done with international cooperation.

Doctors are withdrawn from the sick all the time to do research. But it would be smarter to minimize the growing paperwork and not the research. When arguing about resources for expensive research, energy or other, versus good ideas for energy investments, I would rather argue about good ideas for energy investments versus wasteful lifestyle habits.

But this is slightly religious for me. What is the point of resource abundance if we don't gather new knowledge and ideas? If I could have ten million dollars or lots of insights into how the world works, I would not hesitate in my decision. If, for instance, the USA dismantled all research and creation of new culture but managed to keep a large middle class happily fat while doing zero cost recycling of old culture, I would regard your existence as pointless from my selfish point of view. OK, not completely pointless, since I like to see happy people. ;-)

I am also fascinated by the achievements of modern science, like deep-space observation etc. - but my fascination tends to be curbed once I think of all the lost opportunities to spend the money on sustainability. Like my fascination with modern weapon systems like, say, the Eurofighter gets crushed as soon as I realise it's all for killing people.

Spending money on deep space science or - if you will - on fusion research which might bear fruit in 50-100 years, while we are facing a multicrisis threatening the very existence of humankind on any meaningful level, is like fiddling while Rome burns or like the band playing on while the Titanic sinks.

Until we do reach a sustainable equilibrium, I consider the sums spent on trying to find out how the physical world works on sub-particle levels or in its cosmological context rather obscene.

Re your spiritual point: I consider myself an atheist and have been described as a rather too intellectual and knowledge-oriented person (as opposed to a more empathic, intuitive person). Nonetheless, I feel that the meaning of life can only be true happiness - not in the consumerism/incentives/competition sense but more in the fundamental de Mello sense: being connected to reality, being aware and feeling love for the world and the people around you. This type of happiness would almost automatically lead to a more sustainable world.

Gathering knowledge is fine, as long as it does not preclude solutions for the existential problems of humankind.

Cheers,

Davidyson

I am perhaps not as nice a person as you. I use some of my time to think about how weapons could be more efficient, and I appreciate that what is left of the Swedish military after the Cold War drawdown has very good weapons, and I figure some of them could be even better. But I regard violence as a last resort when everything else fails. The biggest problem with violence is that it works for creating and maintaining power; if violence were never a solution it would not be a problem. But it is of course not a solution for having a nice time. Use of violence shatters the pleasant and relaxed naivety, or rather the feeling of not being hunted, that I and many others enjoy.

Btw, scrap the Eurofighter and buy less expensive Gripen instead. ;-)

Perhaps more seriously, the difference in R&D productivity between different countries shows that we can probably do more globally. I can find examples in the weapons area, but why not take cars instead. Tiny Volvo Cars, owned by Ford, and tiny Saab, owned by GM, have so far managed to be efficient enough at development not to be dismantled by their financially hurting US owners, and are instead used as technology development centers.

That knowledge gathering, even expensive knowledge gathering, should preclude solutions for the physical and existential problems of humankind is odd when we live in the era of maximum oil production and maximum fossil fuel production. We have never ever had so many physical resources available, and right now we physically can make many investments in parallel. The big resource problem is probably to balance investments versus consumption: double ice cream today, or small ice creams for a very long time and the possibility of developing new tastes?

It is of course true that we live on only one Earth and a lot of things are going badly, but human culture is not homogeneous in its striving and priorities. We will probably never have a global prioritization list, and that is OK, since we need competition and multiple solutions to get around the problem that one human or an organization of humans has a limited capacity for knowledge and good decision making. But things will be bad for a lot of people if many people don't think about long term problems and solutions for them.

This line of thought is going off into the blue; I would probably be wise to leave it and get on with my life. :-/

I do appreciate the exchange!

(But have to go home now... ;-))

Cheers,

Davidyson

PS: And yes, I think I will rather buy the Gripen than the Eurofighter - next time, definitely! :-)

Davidyson,

is wholly egocentric. The first and second worlds may be impacted severely by PO, but there are many peoples who use neither oil nor natural gas and therefore they will not be affected.

That's funny, because even the Third World needs fossil fuels - and in a more existential way. If they lose even a fraction of what they have now (for whatever reason), people will die - not just experience a minor discomfort, like the First and Second World.

Right, there are some indigenous tribes that live without fossil fuels.

But even they will be very much affected by the catastrophic climate change that will be accelerated by the not-so-clean "substitution" coal-to-liquids fuel, for example.

This is one world. All crises are interlinked.

And even if the argument were egocentric, the "ego" still consists of about half this planet's population!

Cheers,

Davidyson